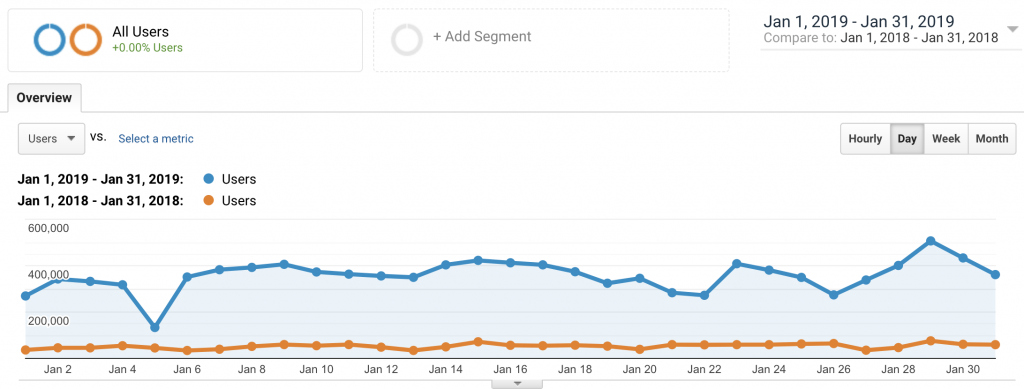

KMi was invited to give a presentation and run a workshop at the first blockchain hackathon run by the Japanese Government, which took place in February in Tokyo.

It was sponsored by the Ministry of Economy, Trade and Industry (METI). Tickets were by application only and limited due to the venue size. There were about 98 participants at the event and 22 teams entered the Hackathon competition.

It ran over two weekends and was extremely well organised and managed by Recruit. The first Saturday had the main presentations as well as workshops on Ethereum Solidity programming and Hyperledger Fabric.

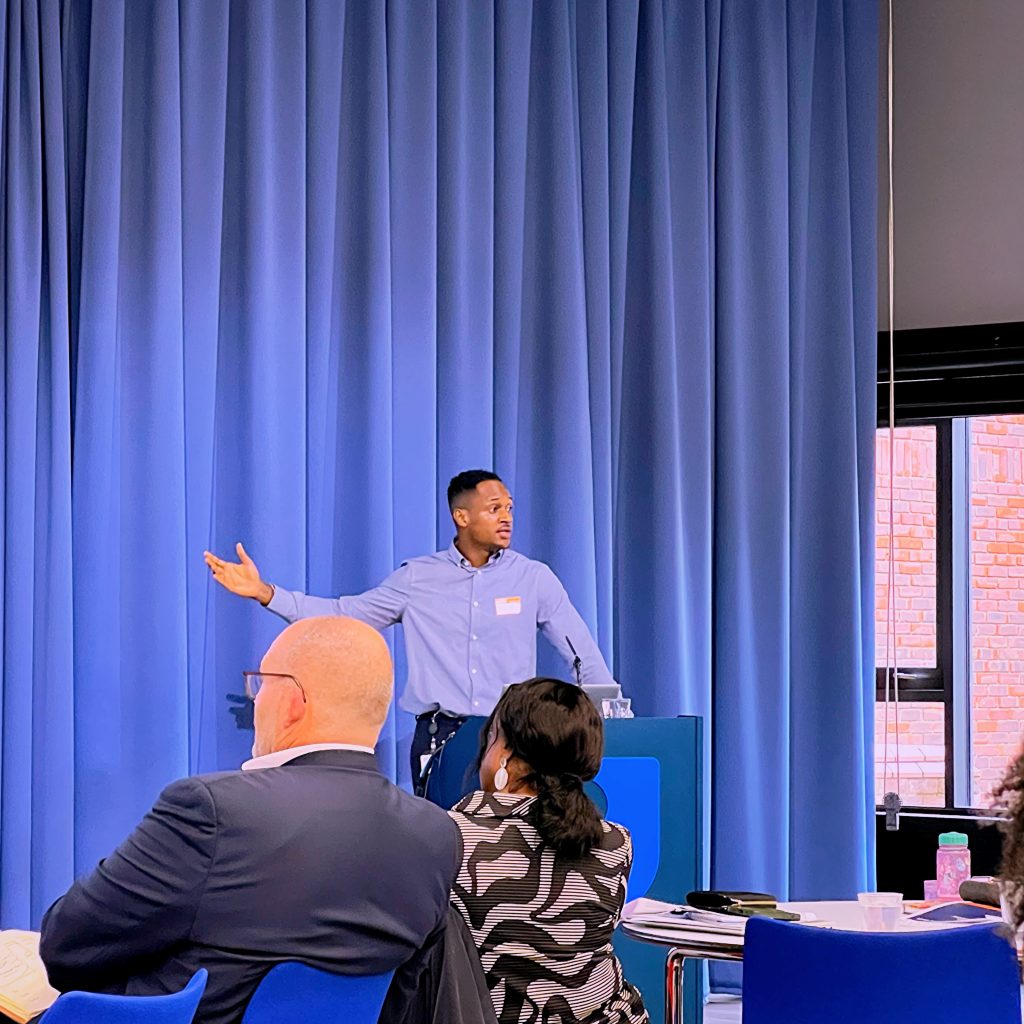

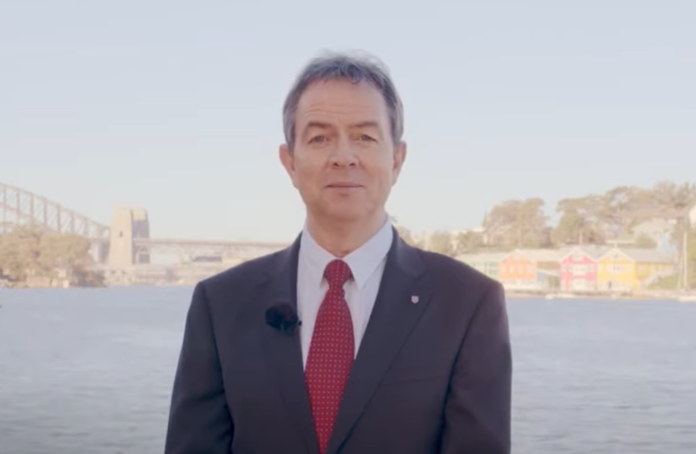

(Youngrok Kim, Senior Vice President from Recruit Strategic Partners, presenting the Hackathon schedule – kind permission given to use this photo – Copyright the Knowledge Media Institute at the Open University)

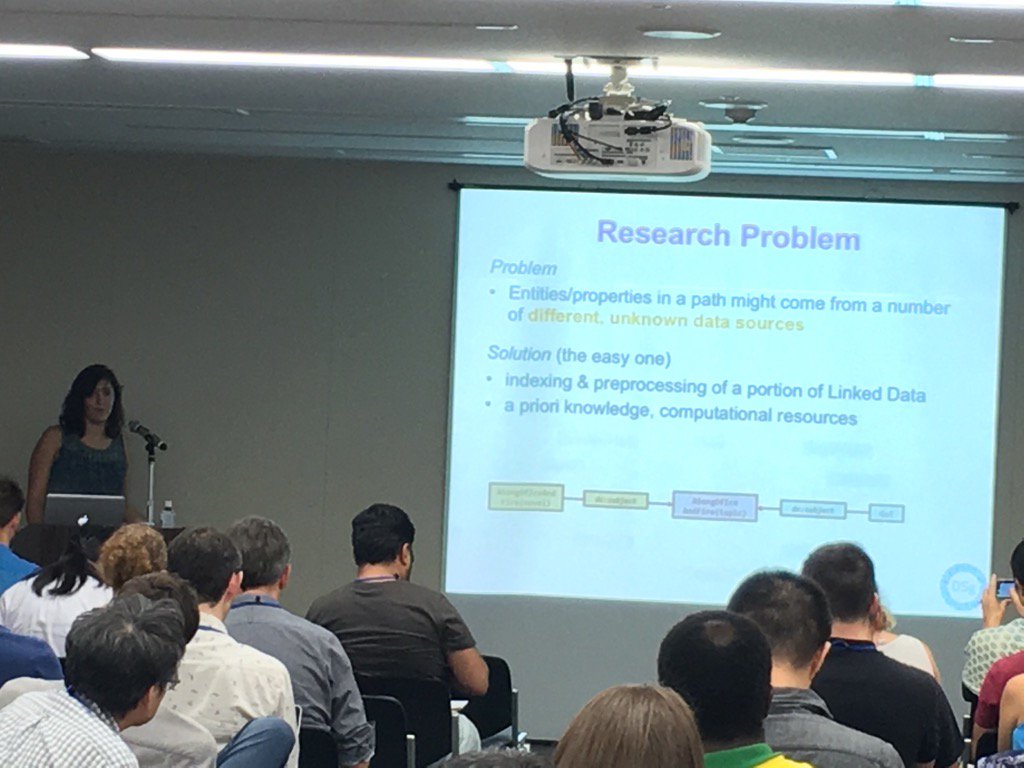

There were 12 external speakers presenting at the Hackathon. Eight were Japanese and four were from outside Japan. Of those from outside Japan, three attended in person and one presented remotely. I spoke on behalf of KMi about our Open Blockchain experiments in Education.

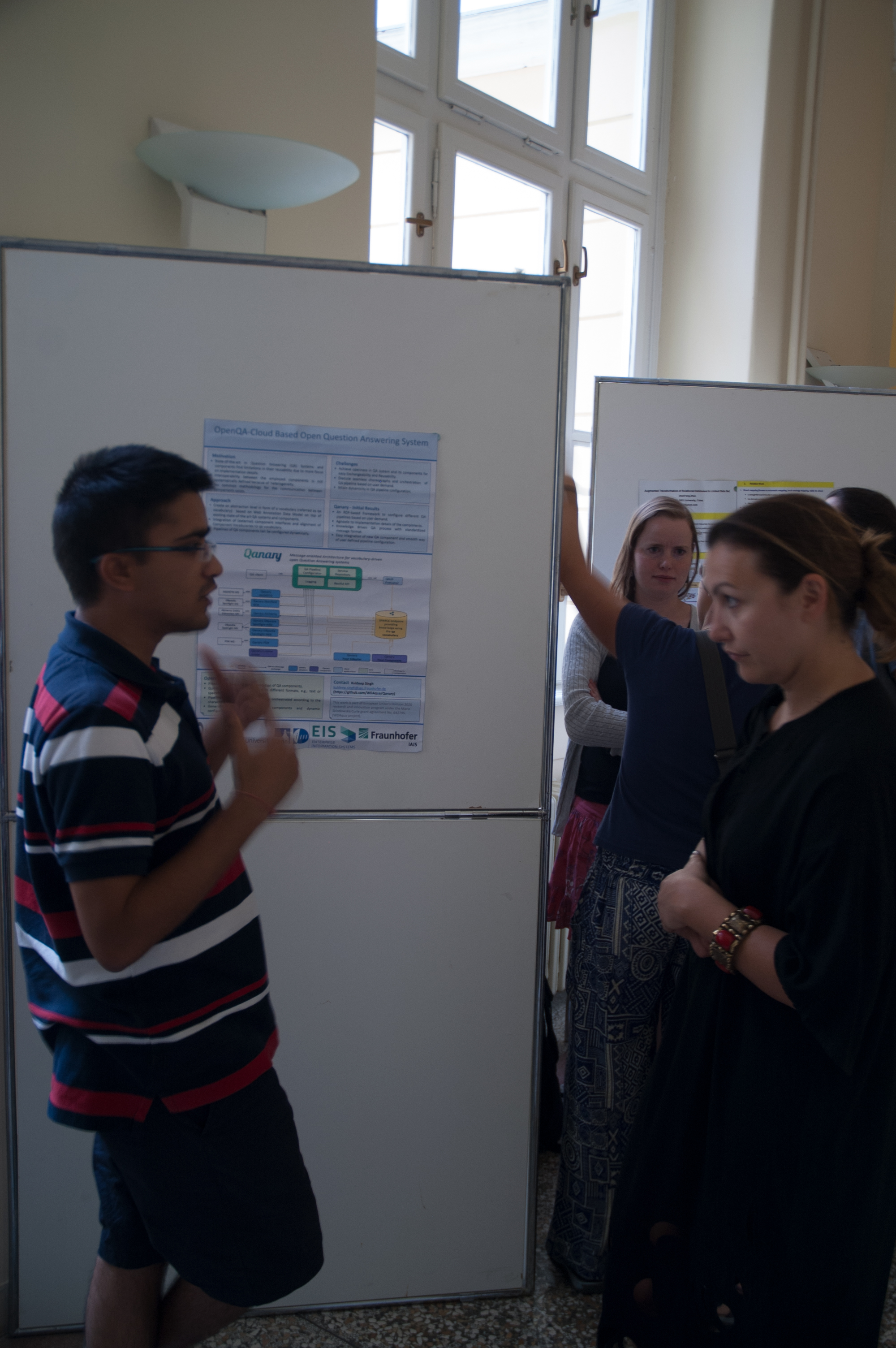

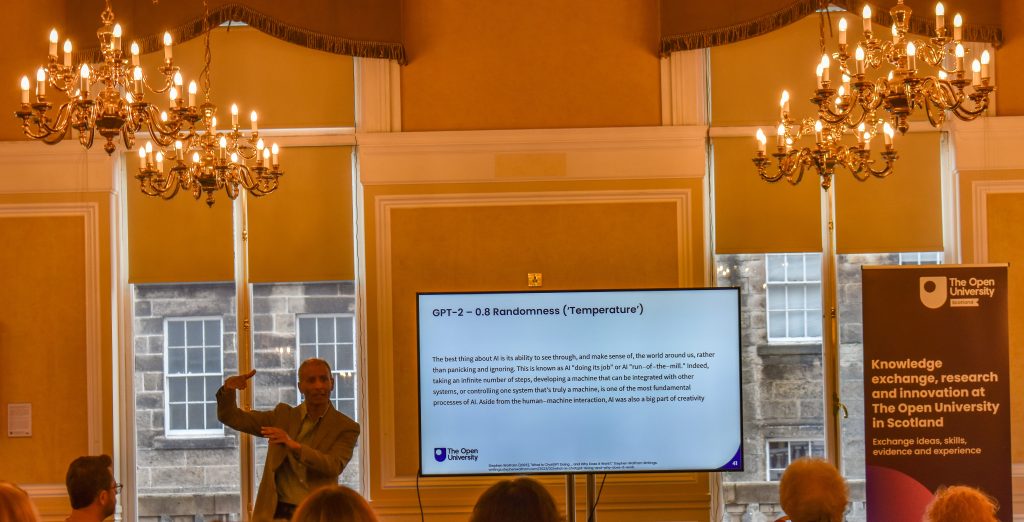

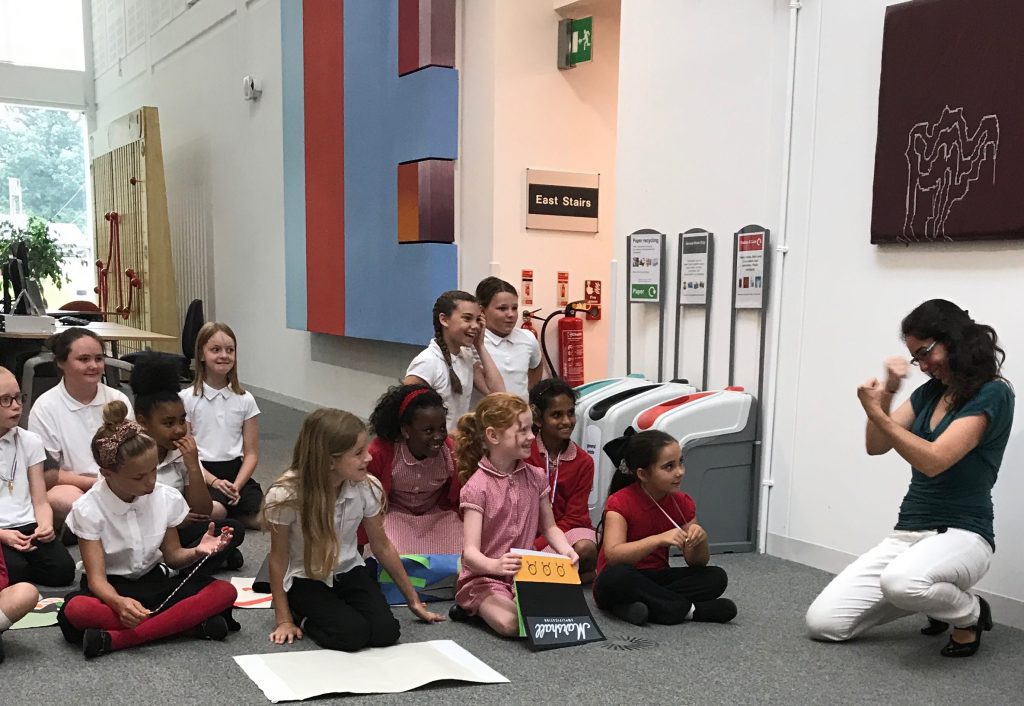

(Michelle Bachler presenting OpenBlockchain research – copyright The Knowledge Media Institute at the Open University)

It was a novel experience working with a translator as I presented, and it took some of the focus off me, which was wonderful!

Professor Soulla Louca from the University of Nicosia gave a presentation about ‘Digital Certificates for Academic and professional history’. The University of Nicosia was the first university to offer a Cryptocurrency course and later the first to offer a Digital Currency Degree. It was also the first to write academic certificates to the Blockchain, back in 2014, using Bitcoin and Merkle tree root hashes to issue batches of certificates in a cost-efficient but verifiable way. More information about their blockchain-based certificate system can be found on their GitHub page.
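To give a flavour of why a Merkle tree root makes batch issuing cheap, here is a minimal sketch in Python. It is my own illustration and not the University of Nicosia's actual implementation: the function names are hypothetical, and the choices of a single SHA-256 per node and of duplicating the last hash on odd-sized levels are assumptions made for simplicity. The idea is that only the 32-byte root needs to be written to the blockchain, while each certificate holder keeps a short proof that links their certificate to that root.

```python
import hashlib


def sha256(data: bytes) -> bytes:
    """Return the raw SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).digest()


def _next_level(level: list[bytes]) -> list[bytes]:
    """Hash adjacent pairs of nodes to build the next level up the tree."""
    return [sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(certificates: list[bytes]) -> bytes:
    """Compute one root hash for a whole batch of certificates."""
    if not certificates:
        raise ValueError("need at least one certificate")
    level = [sha256(cert) for cert in certificates]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # assumption: duplicate the last hash
        level = _next_level(level)
    return level[0]


def merkle_proof(certificates: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Collect the sibling hashes needed to rebuild the root from one certificate.

    Each entry is (sibling_hash, sibling_is_on_the_left).
    """
    level = [sha256(cert) for cert in certificates]
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1  # the other node in the same pair
        proof.append((level[sibling], sibling < index))
        level = _next_level(level)
        index //= 2
    return proof


def verify(certificate: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Check that a certificate belongs to the batch anchored by the root."""
    current = sha256(certificate)
    for sibling, sibling_is_left in proof:
        if sibling_is_left:
            current = sha256(sibling + current)
        else:
            current = sha256(current + sibling)
    return current == root
```

With this sketch, a batch of any size costs one blockchain write for the root, and a verifier only needs the certificate, its proof and the published root to check it.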

(Professor Soulla Louca from the University of Nicosia – kind permission given to use this photo – copyright The Knowledge Media Institute at the Open University)

Denis Parfenov from Data Management Hub and Open Knowledge Ireland gave an interesting presentation about ‘Data, Policies and Algorithms’. The Data Management Hub (DaMaHub) believes distributed web technologies are well suited ‘to meeting the challenges of creating a network infrastructure which natively supports research data which is findable, accessible, interoperable, reusable’ (FAIR). You can find out more about their aims and vision from their website and their GitHub page.

(Denis Parfenov from Data Management Hub – kind permission given to use this photo – copyright The Knowledge Media Institute at the Open University)

There was also a remote presentation on the second Saturday from Dr Sönke Bartling, Founder of Blockchain for Science, who talked about ‘Blockchain and Cryptoeconomy for Science’.

There were also eight Japanese-language presentations over the course of the Hackathon. One was from the OpenID Foundation Japan and one from the NEM Foundation. NEM, I discovered, is a popular blockchain service provider in Japan and was used by several of the Hackathon teams for their competition entries.

There was also what looked like a very interesting presentation from the BlockBase CEO, Taiju Sanagi, about Blockchain-based Identity systems and ERC725. Taiju Sanagi is also a member of the ERC-725 Alliance. It made me wish I could speak Japanese, which happened a lot during my time at the event.

(Taiju Sanagi, CEO, BlockBase Inc. – kind permission given to use this photo – copyright The Knowledge Media Institute at the Open University)

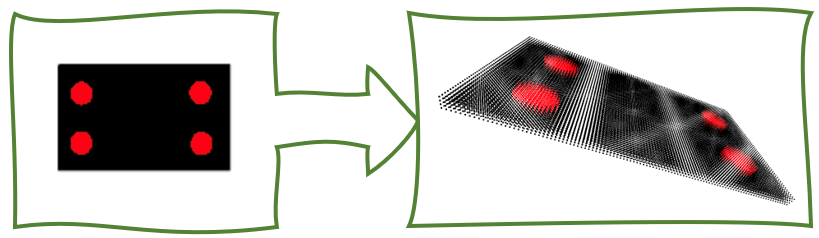

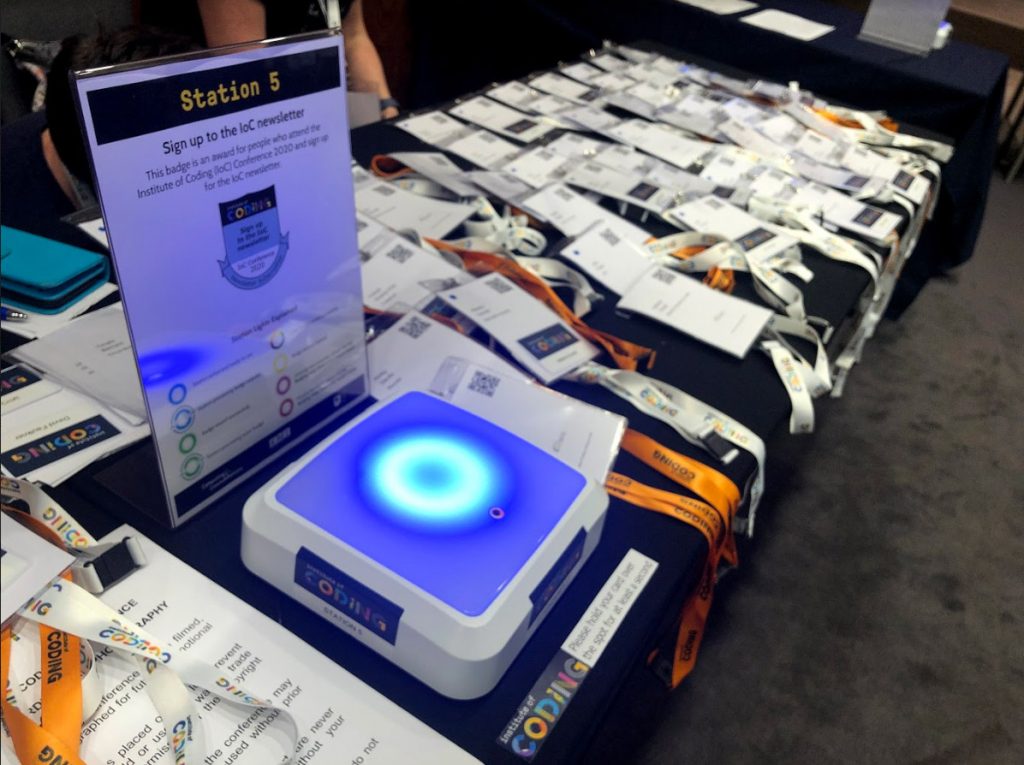

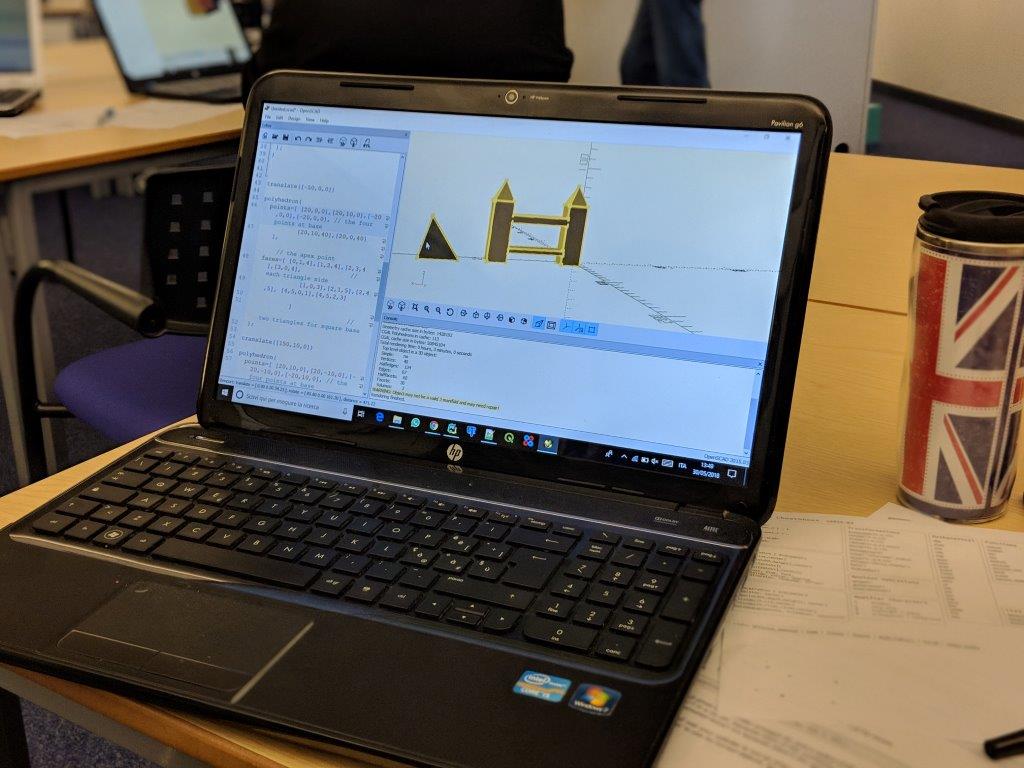

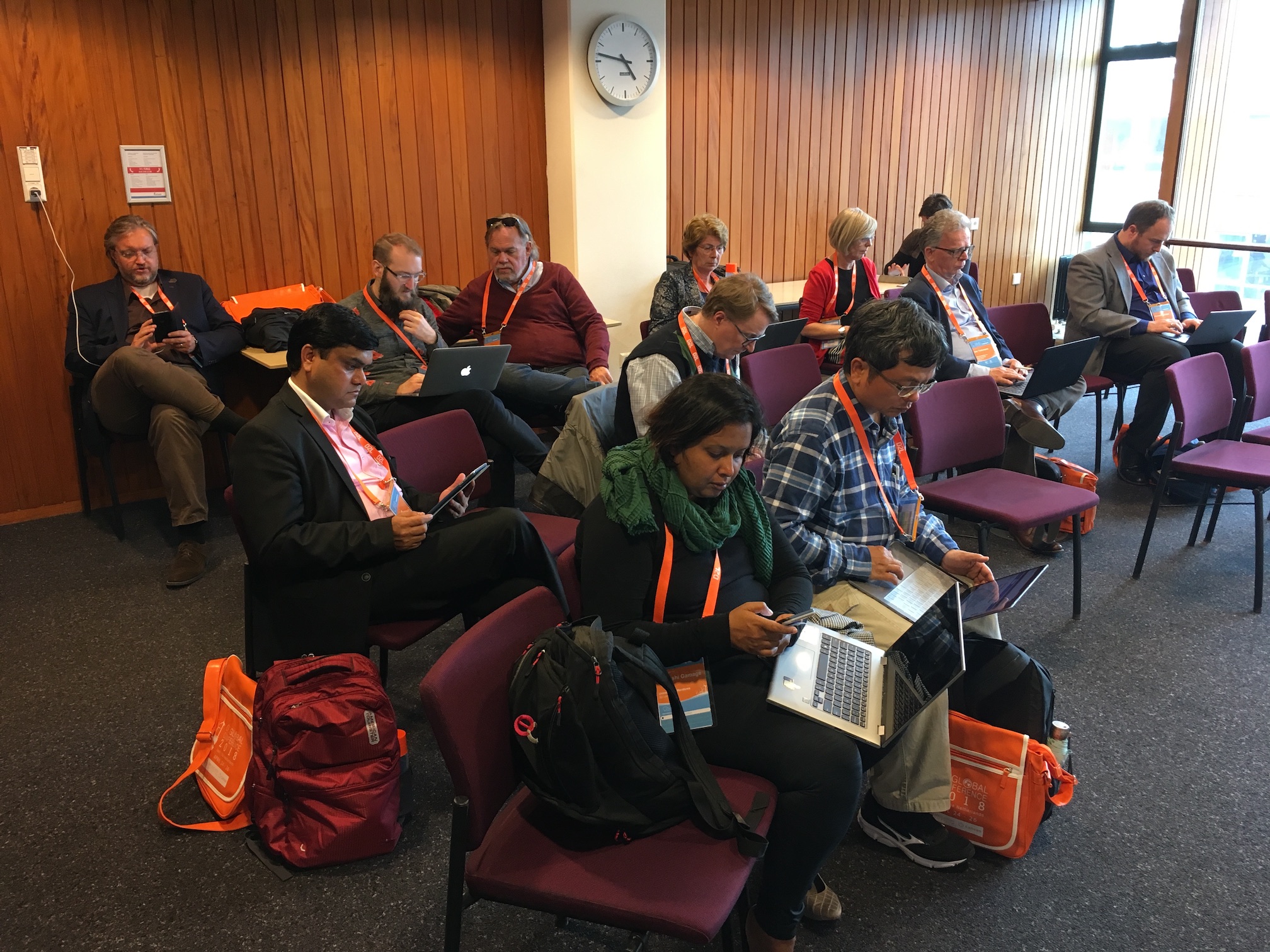

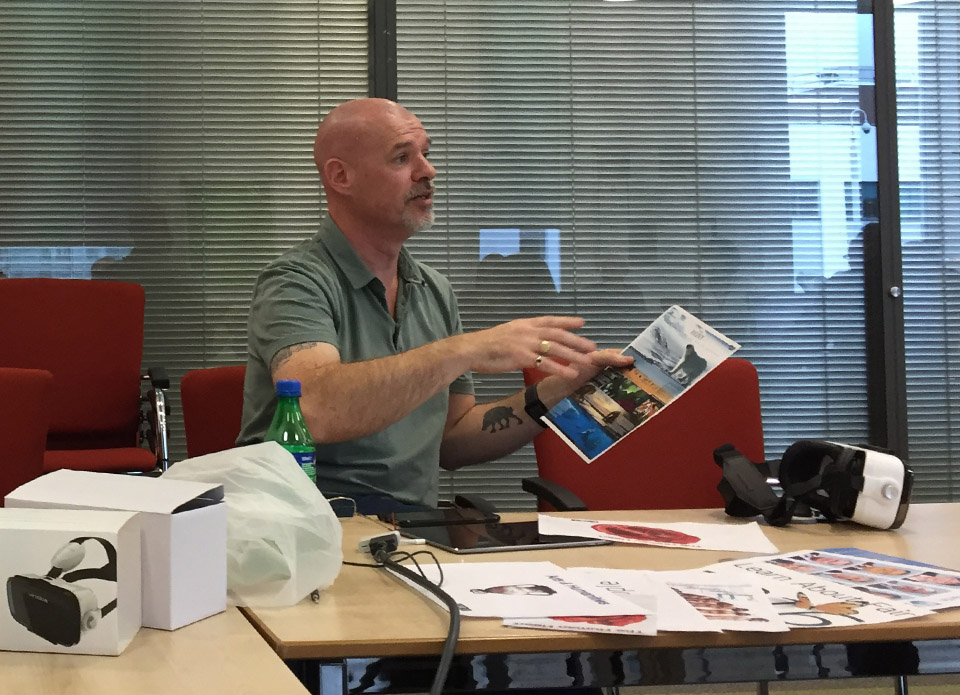

On the second Saturday, I gave a second presentation and a follow-on workshop on putting certificates on the Blockchain. This was a more technical talk and gave an overview of the landscape of certificates on the blockchain and our research in it. The workshop then gave the attendees a chance to get hands-on and try creating some badges and putting them on our blockchain in various ways for themselves. We created three separate Open Badges for them to earn while completing the workshop tasks.


About 20 people attended, which I thought was quite good considering most were manically hacking away downstairs working on the final coding of their entries for the competition, which were being judged the following day.

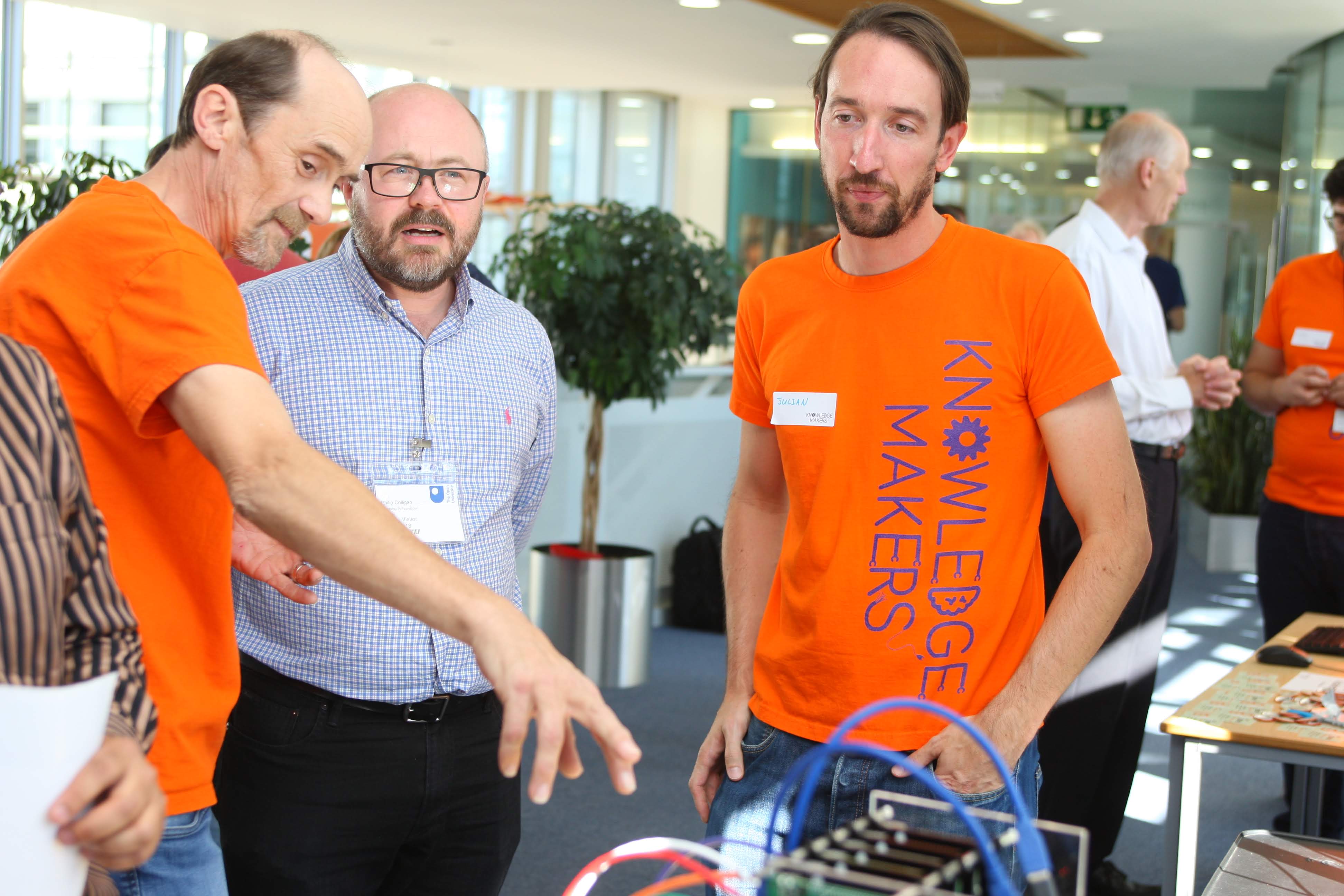

(Some of the Hackathon teams working away – copyright The Knowledge Media Institute at the Open University)

The workshop seemed to go down well though. Several people came to talk to me about it. I just want to thank Kevin Quick and Jon Linney for all their help preparing the workshop website.

The final day of the Hackathon saw the judging. Somehow during the morning, the teams were whittled down from 22 to 12. The final 12 teams then presented their projects in the afternoon for judging. There was a panel of 12 judges with members from tech, industry and education. The jury President was Masanori Kusunoki, the CTO of Japan Digital Design.

Unfortunately, taking photos of the presentations was not permitted, and they were all given in Japanese. However, luckily for Denis and me, Chris DAI, the CEO of LONGHASH Japan, which was one of the organisers of the event, was able to sit with us through some of the presentations and give us an idea of what was going on. Otherwise we would have been totally lost. Thank you, Chris, for the translation and for your company providing cool LONGHASH stickers, one of which is now on my laptop!


After the presentations came the awards. There were official awards in various categories as well as some separate unofficial prizes from individual judges. BlockBase, which I mentioned earlier, entered a team called DigiD. They worked in collaboration with Digital Hollywood University for the Hackathon and created a system that collectively manages a portfolio of degrees and enrolments. They won three awards and judges' prizes in the end, including the Industrial Technology and Environment Bureau Award.

(DigiD Team – Copyright Ministry of Economy, Trade and Industry, Japan – press release)

You can see more details about the winners on the official Ministry press release page. After the awards were given out, there was a small post-Hackathon pizza party.

(General shot of the party. Copyright, The Knowledge Media Institute at the Open University)

Most of the developers had worked through the night finishing their entries and were tired by this stage, so it was a subdued gathering, with a bit of networking. I did exchange a lot of business cards and very much enjoyed not shaking hands with anyone, but just bowing slightly and smiling widely.

Because I was there for a week, I got a chance, in between preparing for the workshop, to see some of Tokyo.

(The East Gardens of the Imperial Palace, Tokyo. Copyright Michelle Bachler)

I visited many of the gardens in Tokyo over the week. Navigating around on the train system was really easy. Transport was always on time and everywhere was so clean. There were also hardly any cars in the city, so somehow it was quieter than I would have expected a capital city to be.

Like the locals, I became a little obsessed with taking pictures of the early cherry blossom that had just started to come out on a few trees in the parks. You could tell from the number of trees in bud that in a few weeks it would be a breath-taking spectacle.

(Cherry blossom, Shinjuku Gyoen National Garden, Tokyo. Copyright Michelle Bachler)

I became more confident with the transport as the week progressed, so on the Friday I took a longer train ride out of the city and visited the coastal town of Kamakura. It was the political centre of medieval Japan and has dozens of Buddhist Zen temples and Shinto shrines.

Unfortunately, the day I picked for my trip out of the city, there was quite heavy snow all morning and into the afternoon. Luckily, I had packed my duvet coat, so it was fine. I also found the snow falling at the temples beautiful to watch. It made the whole experience more magical and slightly surreal.

I managed to fit in five temples on my day out and had tea in tea houses at two of them. I took loads of pictures; here are just a few of my favourites to give a flavour:

(Tea at Jomyoji Zen Temple tea house, watching the snow falling. Copyright Michelle Bachler)

(Tea at Hokokuji Temple tea house, contemplating the bamboo forest. Copyright Michelle Bachler)

(Heavy snow at Tsurugaoka Hachiman-gū Shinto Shrine. Copyright Michelle Bachler)

(Great Buddha at Kōtoku-in Buddhist Temple. Copyright Michelle Bachler)

(Hasedera Buddhist Temple. Copyright Michelle Bachler)

All in all, it was a fabulous week and left me feeling like I had hardly scratched the surface of this magical country. I will be back!

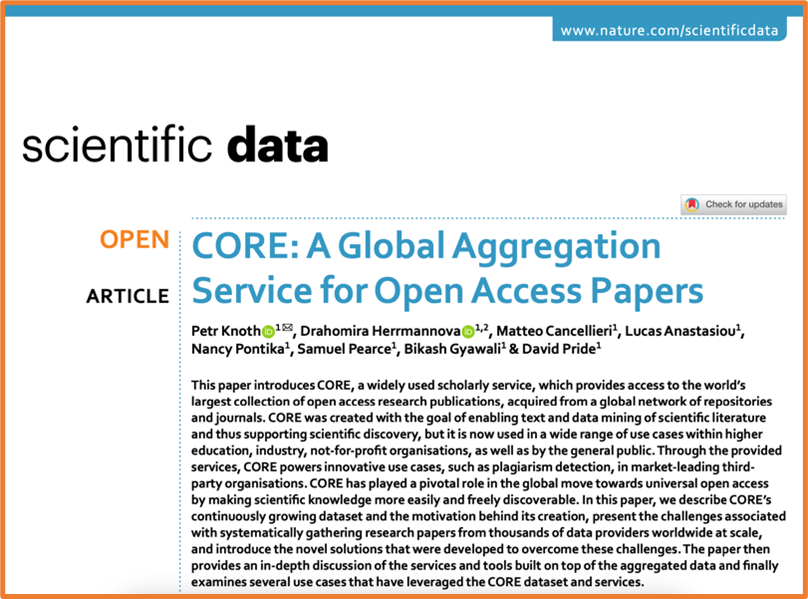

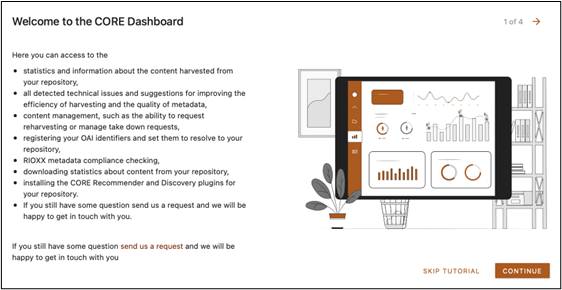

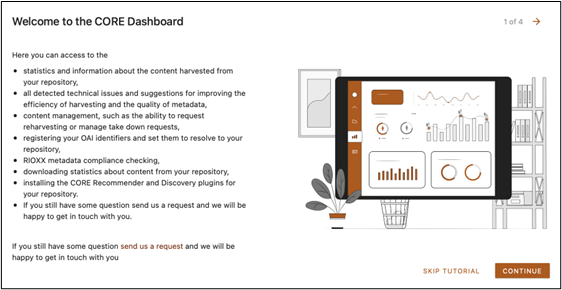

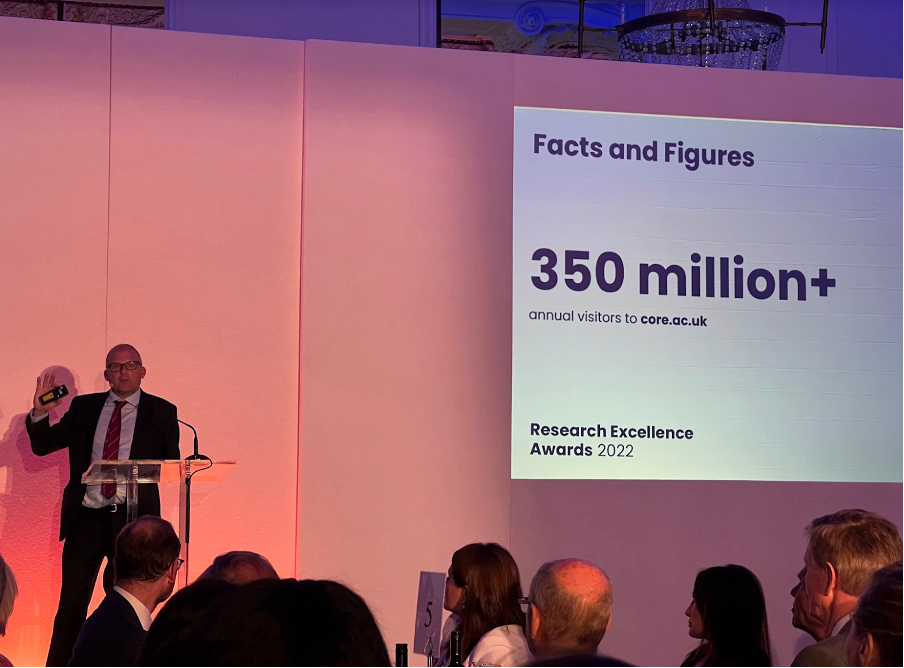

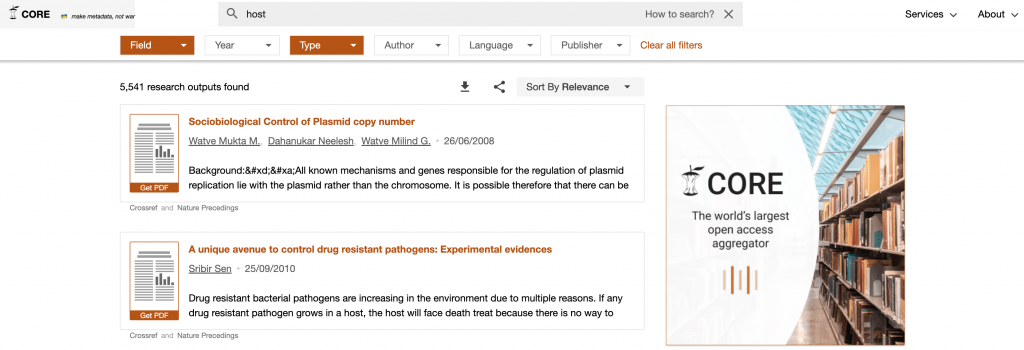

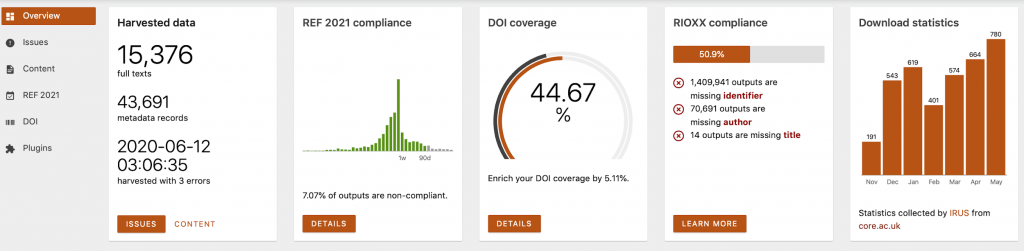

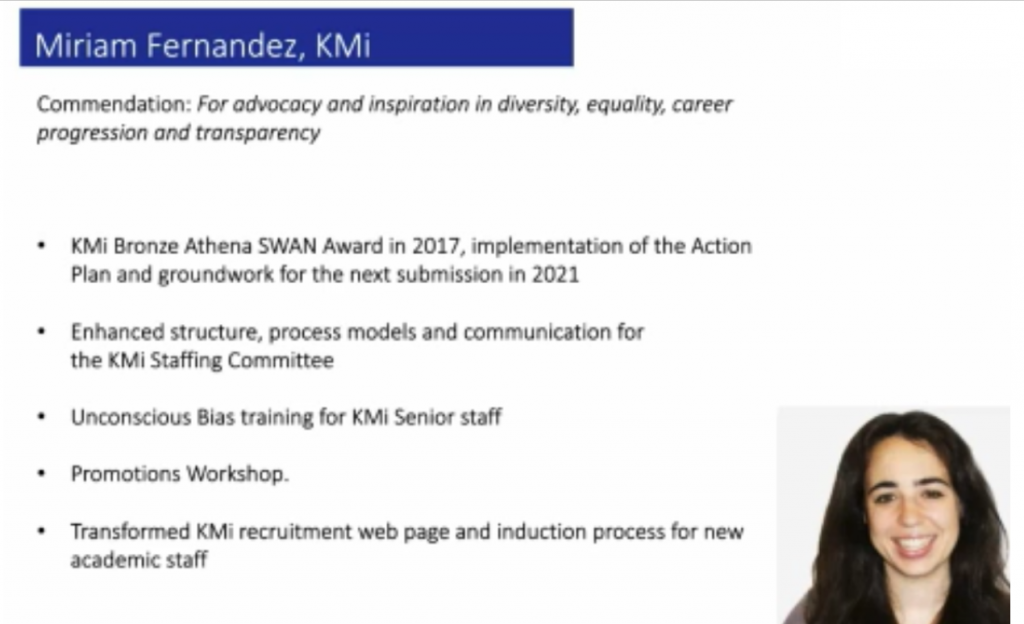

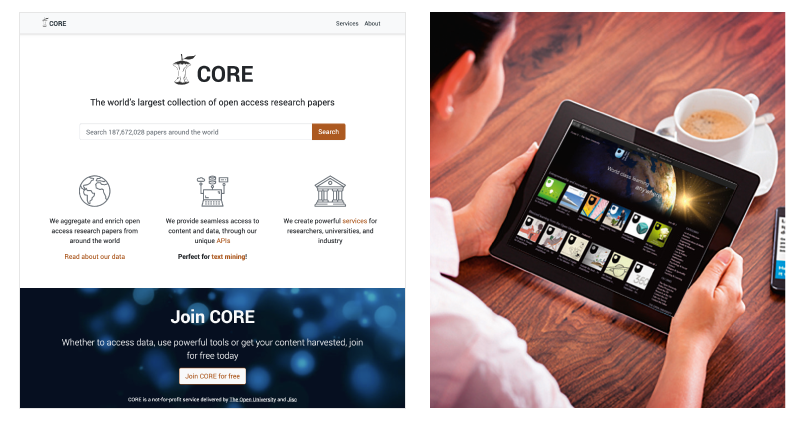

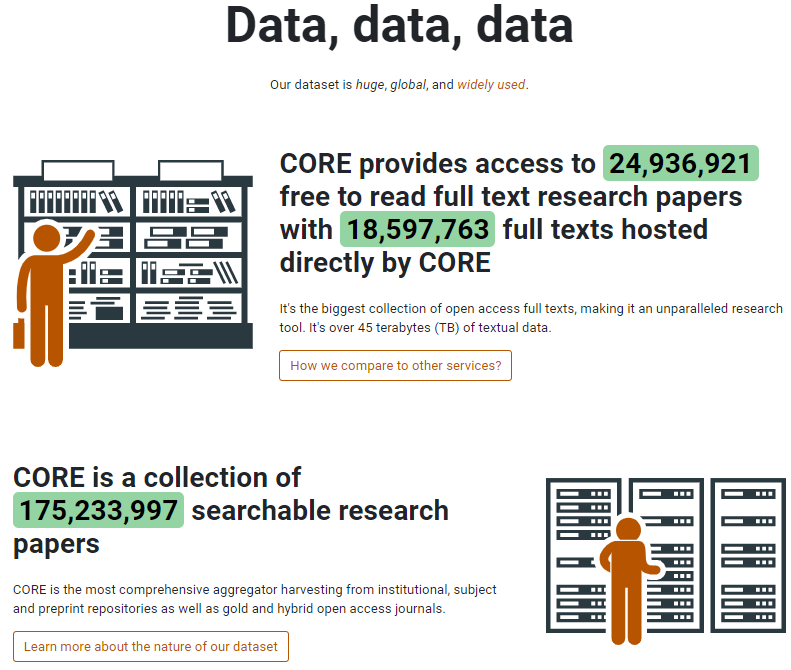

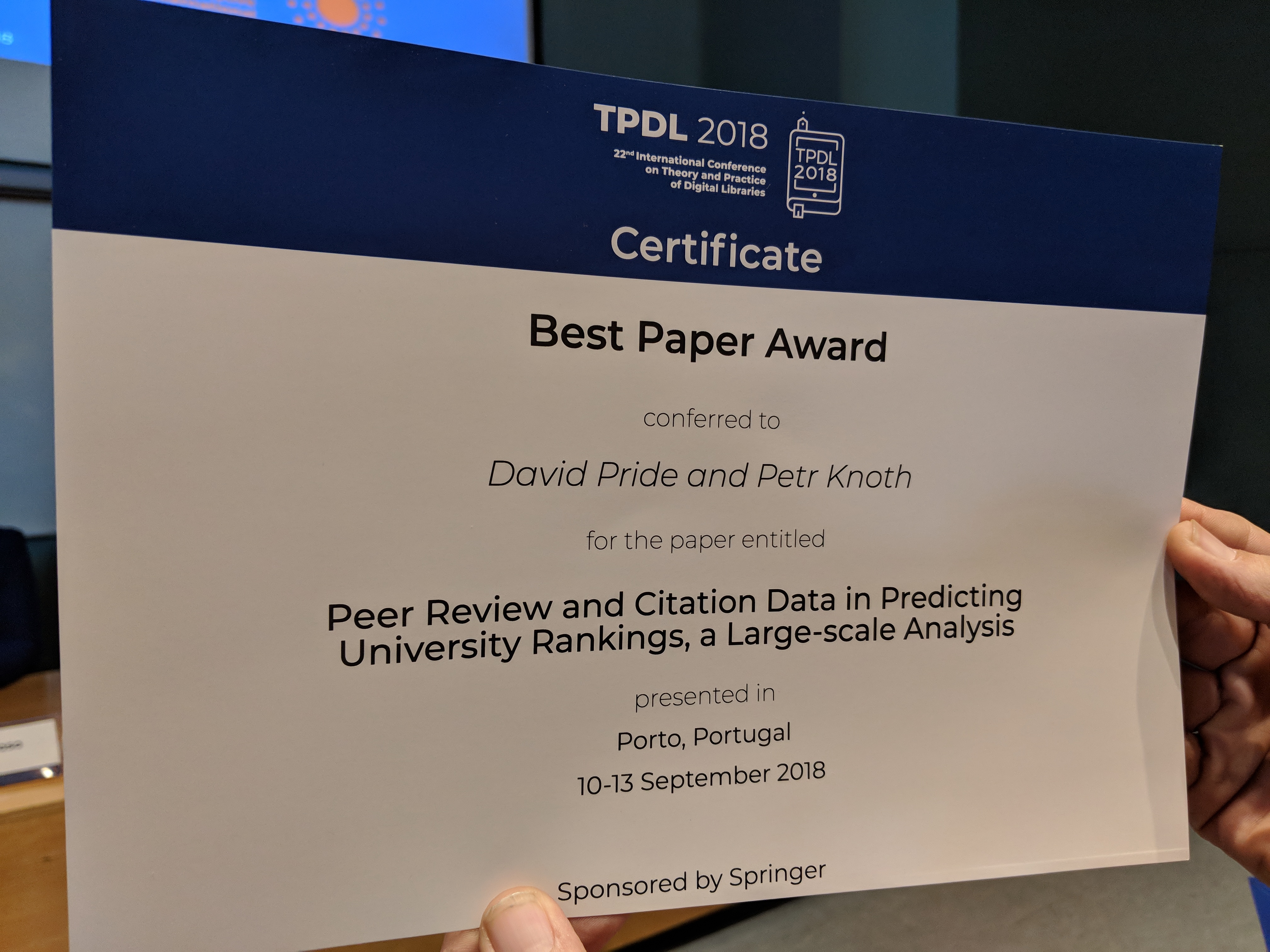

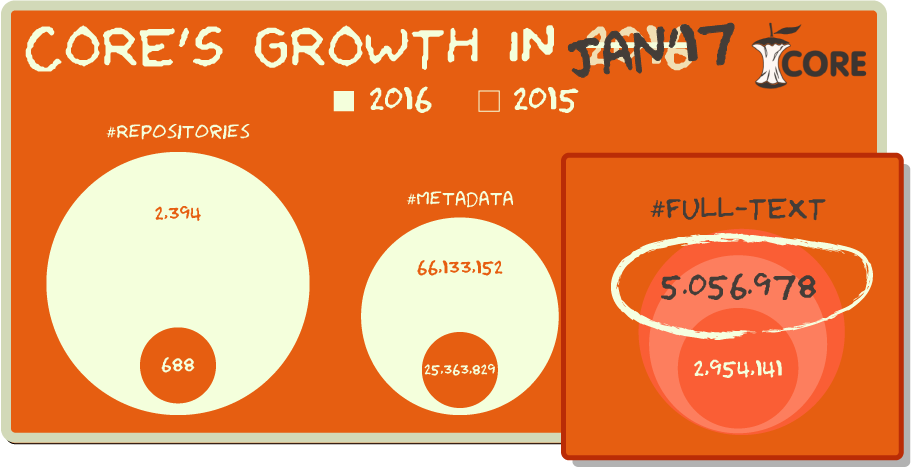


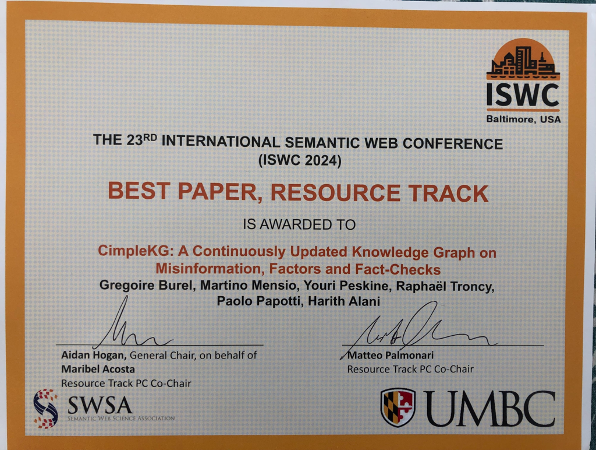

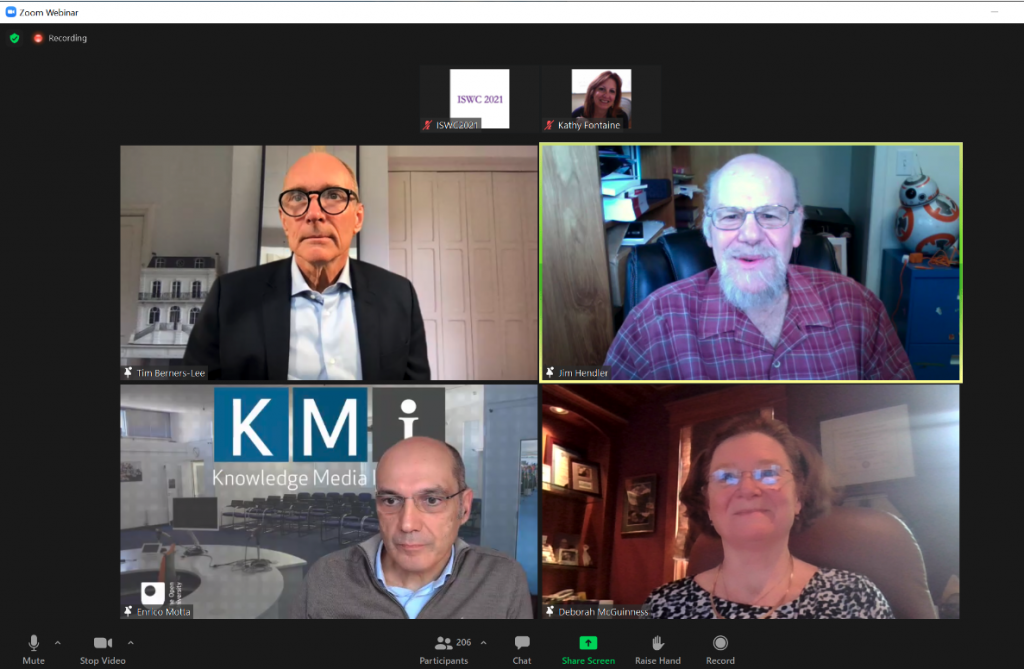

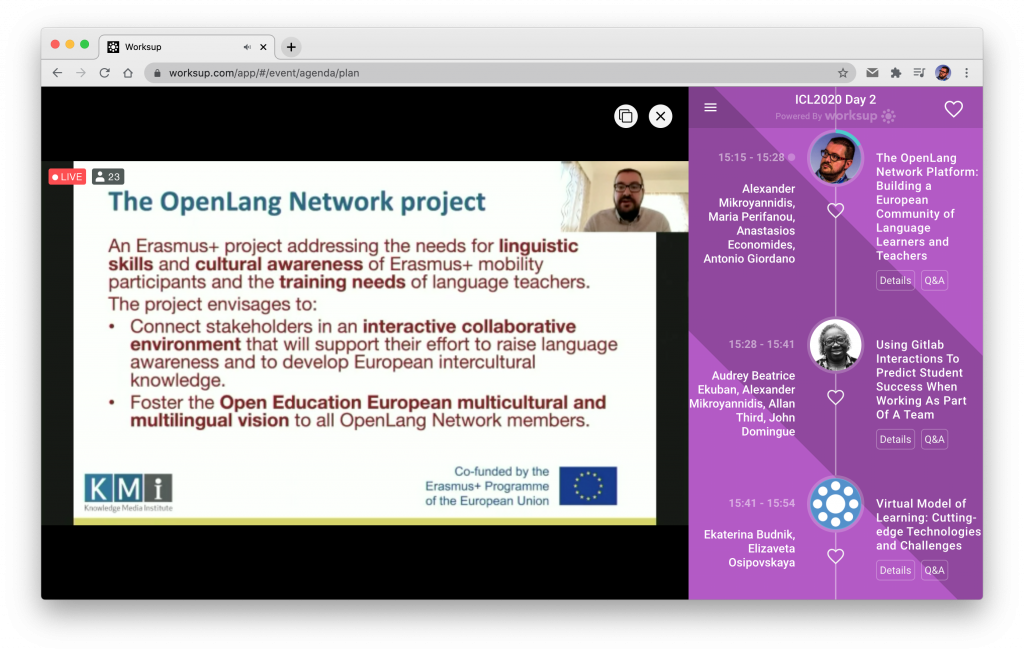

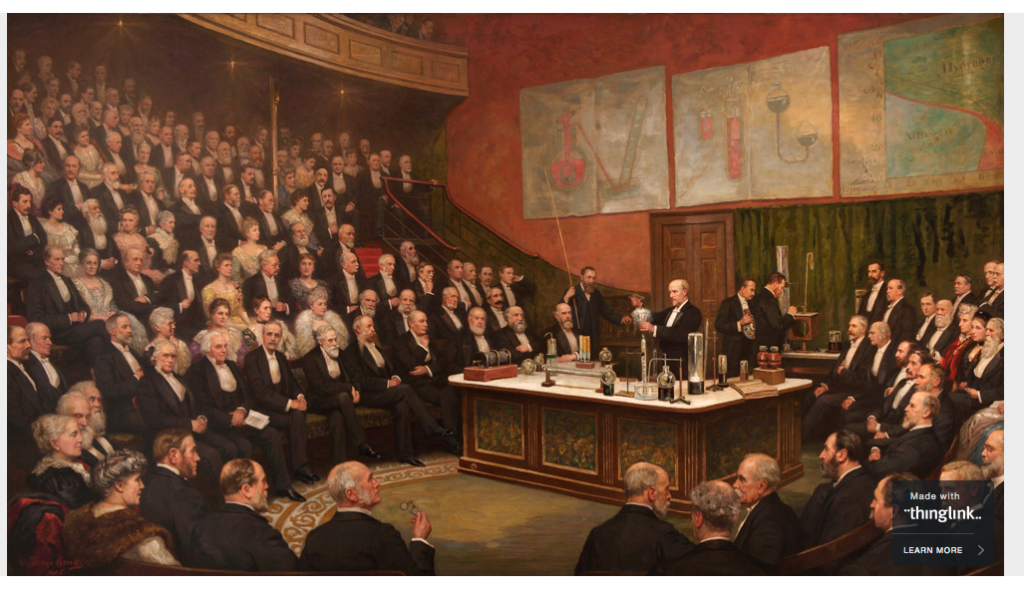

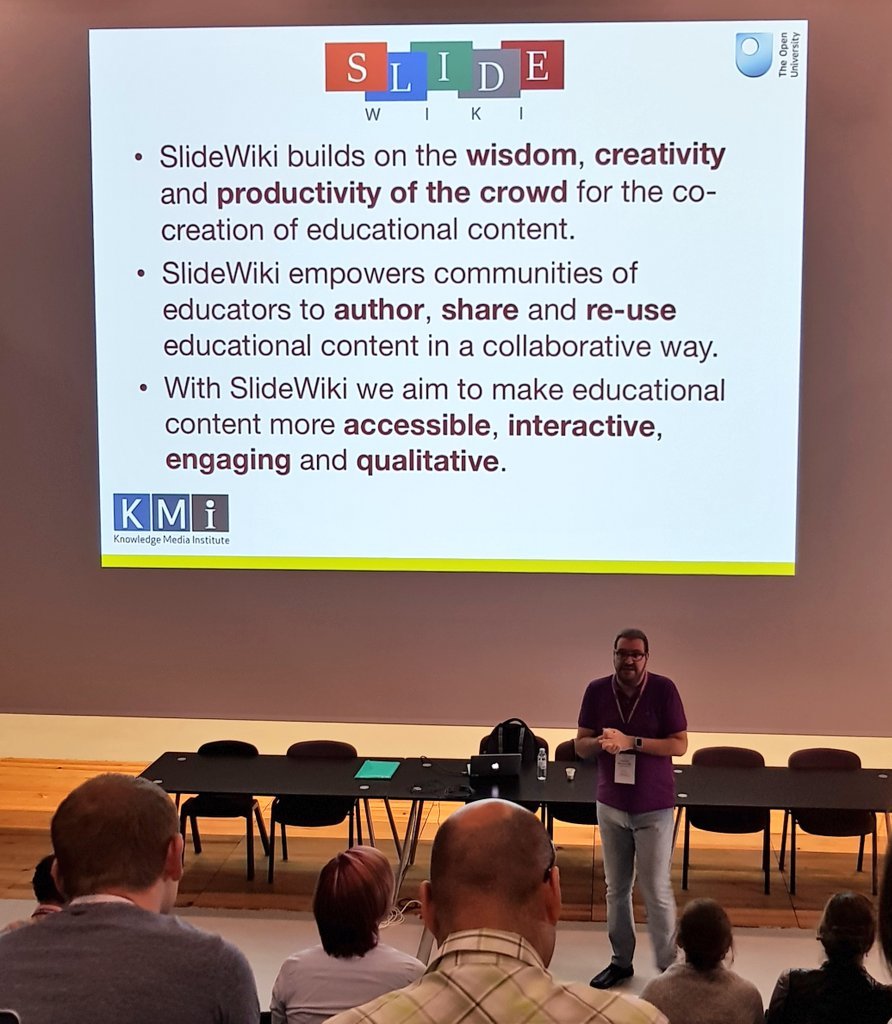

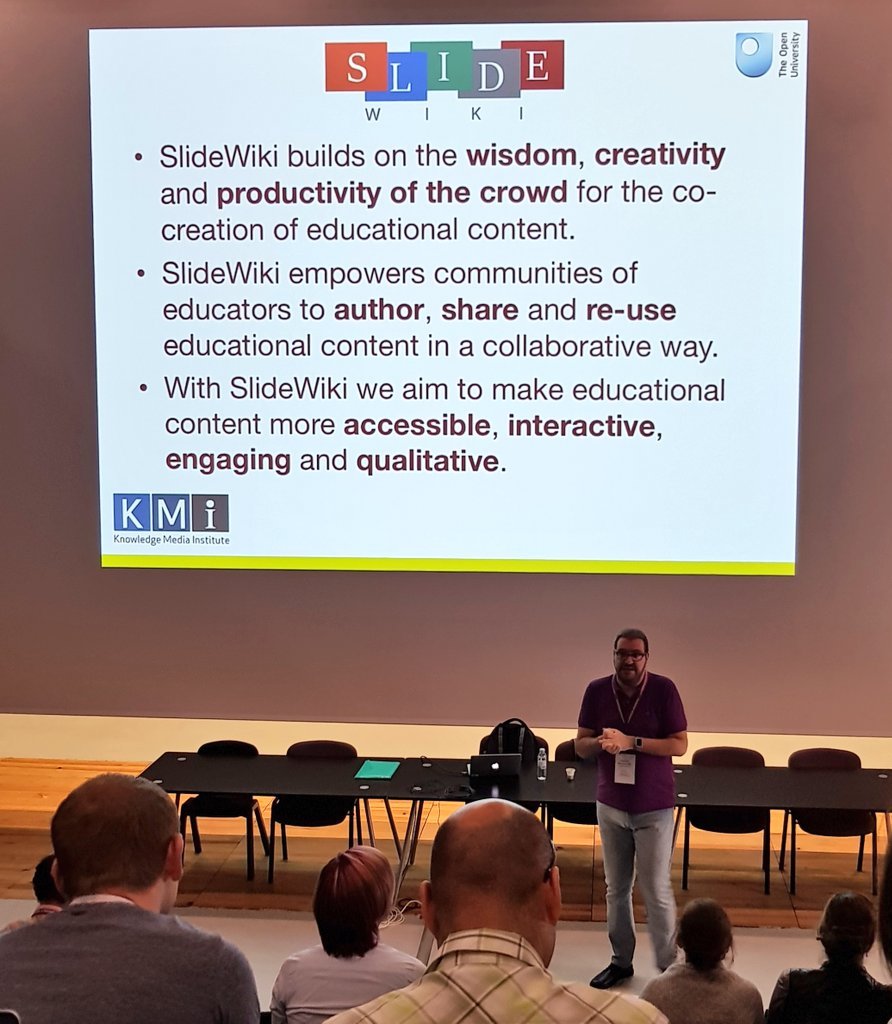


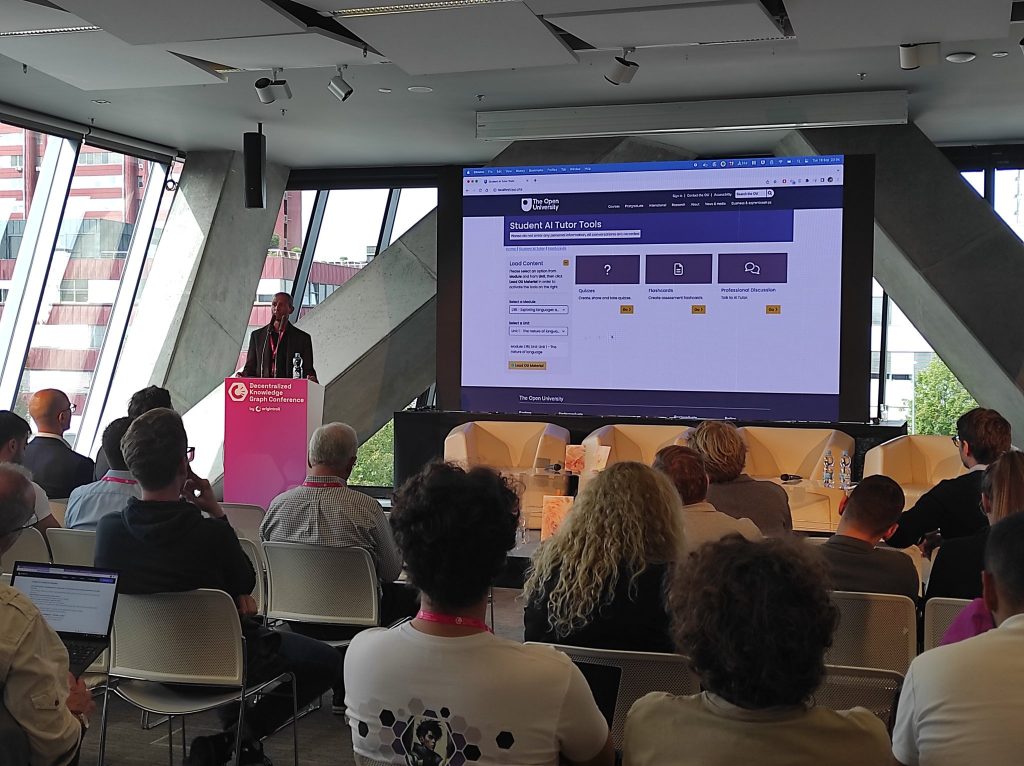


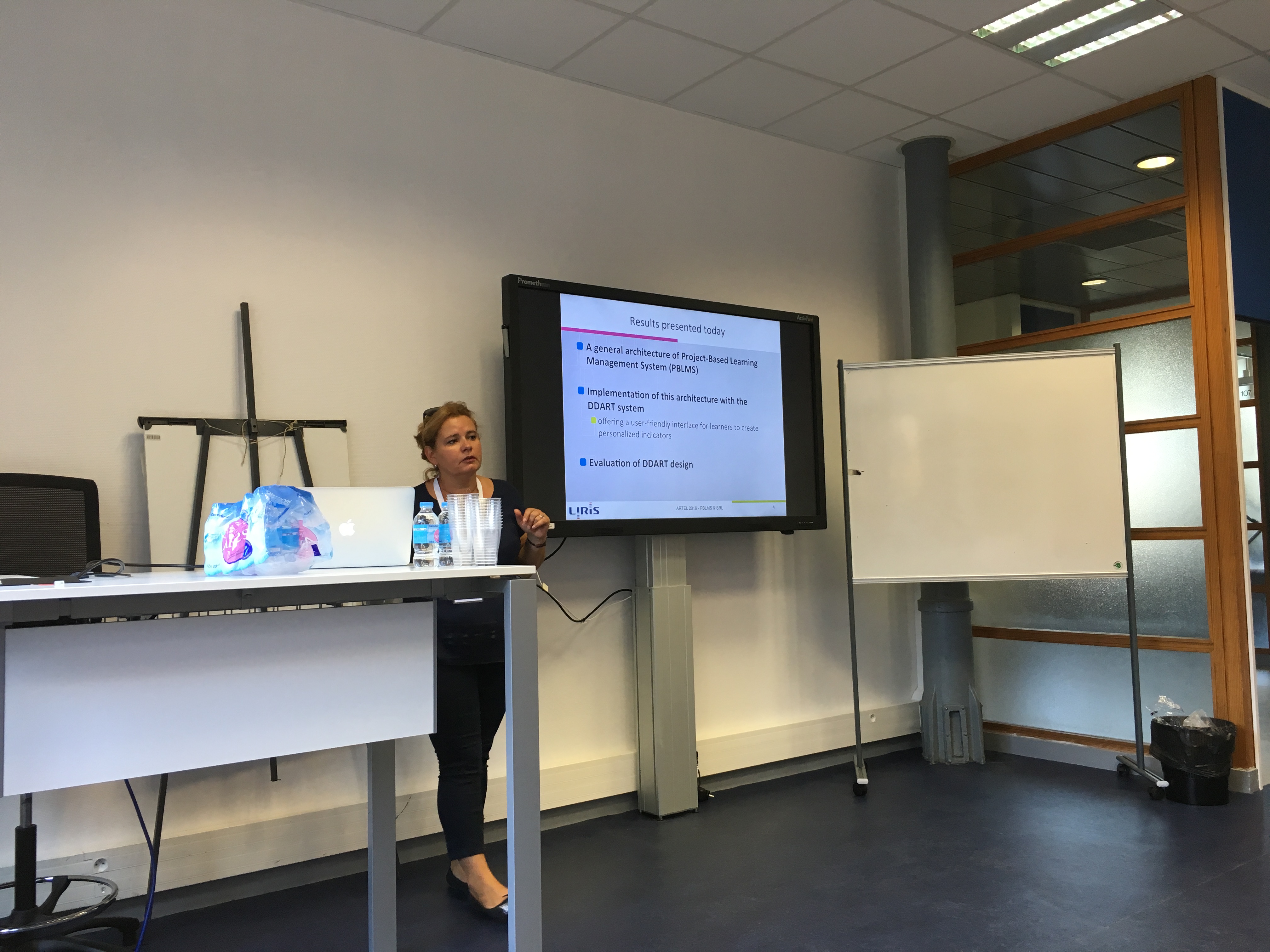

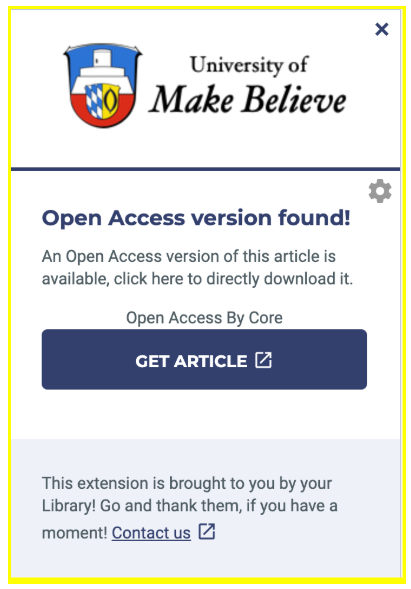

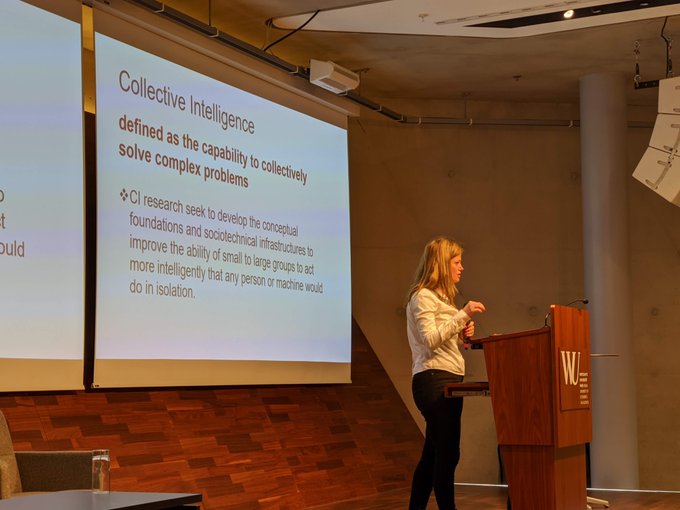

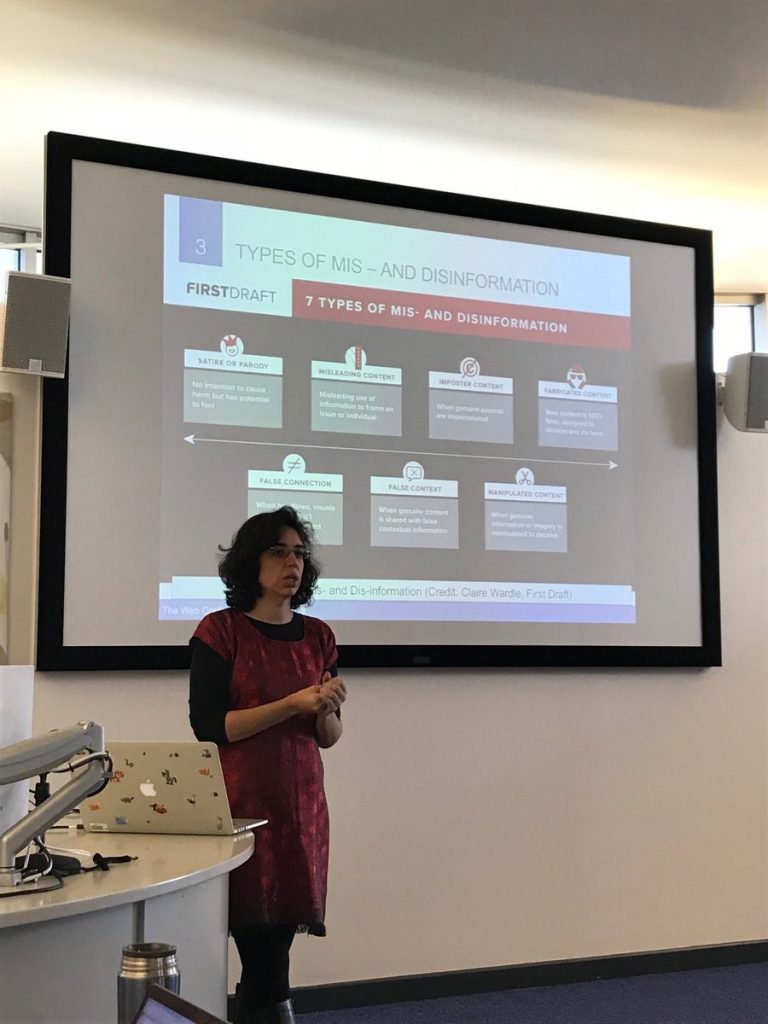

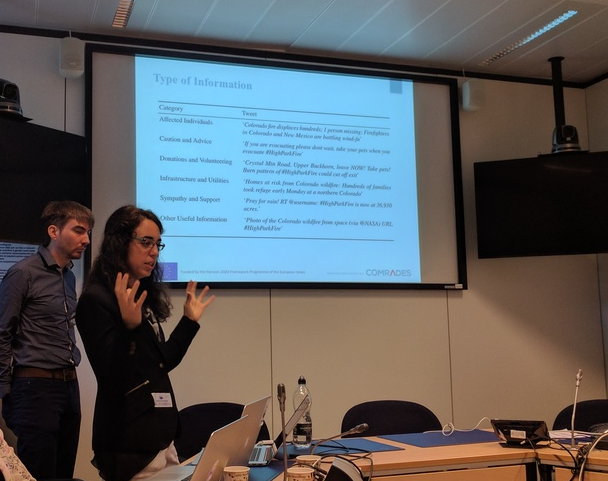


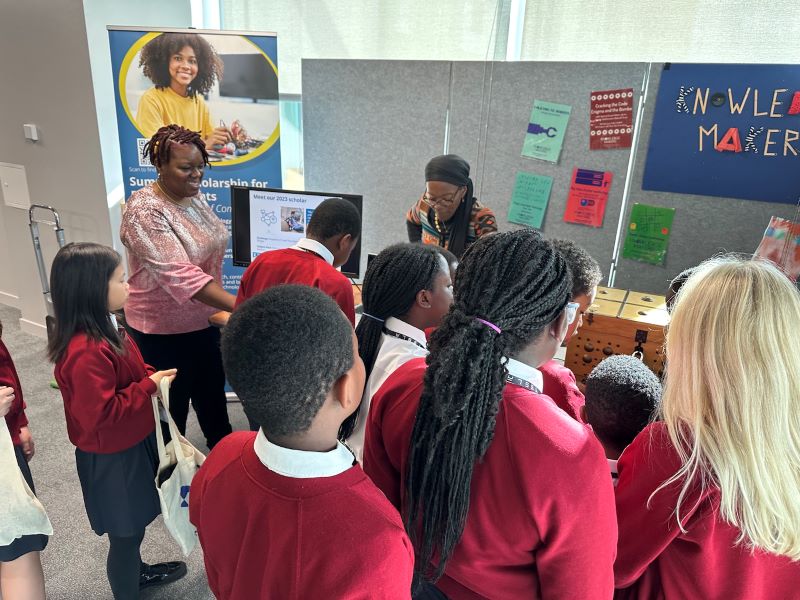
